Warehouse Computer Vision: From Research Lab to Pack Station

Five years ago, “computer vision in the warehouse” meant a research paper, a controlled demo, and a vague timeline. The models were too large. The hardware was too expensive. Accuracy was too low for anything touching a live customer order. Today, computer vision runs on production pack stations processing millions of orders. The technology crossed a threshold, and most of the industry hasn’t caught up to what that means.

This is the story of what changed and where computer vision actually works in warehouse operations right now.

The research lab era

Computer vision research has existed for decades. Object recognition, image classification, semantic segmentation — academic labs at Stanford, MIT, and CMU kept publishing breakthroughs. But there was a persistent gap between “works in a lab” and “works on a warehouse floor at 2 AM during peak season.”

Labs are controlled environments. Consistent lighting. Clean backgrounds. Known object sets. Unlimited processing time. A model that takes 3 seconds to classify an image is fine in a lab. On a pack station processing 400 orders per shift, 3 seconds per image is a non-starter.

Warehouse environments are the opposite. Lighting varies by zone and time of day. Backgrounds are cluttered. Products come in thousands of variations. Workers move fast and don’t pose items for the camera. Any system that can’t keep up with the pace of the operation gets turned off.

For most of computer vision’s history, the gap between lab accuracy and production accuracy was too wide. Models that performed well on curated datasets fell apart against real-world variability. The technology existed. The operational readiness didn’t.

Three things that changed

The shift from research lab to production floor didn’t happen because of one breakthrough. Three parallel developments converged to make warehouse computer vision practical.

Edge computing got smaller, cheaper, and faster

Early computer vision systems required expensive GPU servers. Running inference on a neural network meant sending data to a server room (or the cloud), processing it, and sending the result back. The latency killed real-time verification. The cost killed per-station deployment.

Edge computing changed the equation. Purpose-built inference hardware can now run complex vision models directly at the pack station. No round trip to the cloud. No dependency on internet connectivity. Processing happens in milliseconds, right where the work happens.

This matters more than it sounds. A 3PL running 50 stations doesn’t want to route 50 live video feeds to a central server. They want each station to process its own data locally, with results showing up instantly. Edge computing makes that architecture work at a cost that scales linearly with station count.

Models got smaller without getting dumber

The first generation of production-capable vision models were massive. Hundreds of millions of parameters. They needed powerful GPUs and consumed significant energy. Running them at the edge was impractical.

Model compression, knowledge distillation, and architectural changes (efficient attention mechanisms, optimized convolutional networks) produced models that are a fraction of the size with comparable accuracy. A model that required an enterprise GPU in 2020 now runs on an edge device that fits under a pack station table.

Smaller models also mean faster inference. Instead of one frame every few seconds, modern models process multiple frames per second. That speed matters when a packer isn’t going to wait for the system to catch up.

Training data reached critical mass

Vision models need data. Lots of it. Early warehouse computer vision projects struggled because there wasn’t enough labeled warehouse imagery to train accurate models. Every new product, every new packaging configuration, every new station layout required additional training data.

Two things solved this. First, synthetic data generation: you can now generate training images of products in various orientations, lighting conditions, and backgrounds without physically photographing every permutation. Second, production deployments generate their own data. Once a system is running on pack stations processing thousands of orders per day, it creates the training data that makes the models better. Rabot’s platform has generated billions of frames of visual data across customer deployments, continuously improving model accuracy from real production environments.

This creates a flywheel. More deployments generate more data. More data improves model accuracy. Better accuracy drives more deployments. The early movers in warehouse computer vision have years of production data that new entrants can’t replicate. That head start compounds.

Where computer vision works in warehouses today

Computer vision isn’t a single technology. It’s a set of capabilities that apply differently to different warehouse processes. Here’s where it’s actually working in production, not in pilots or demos.

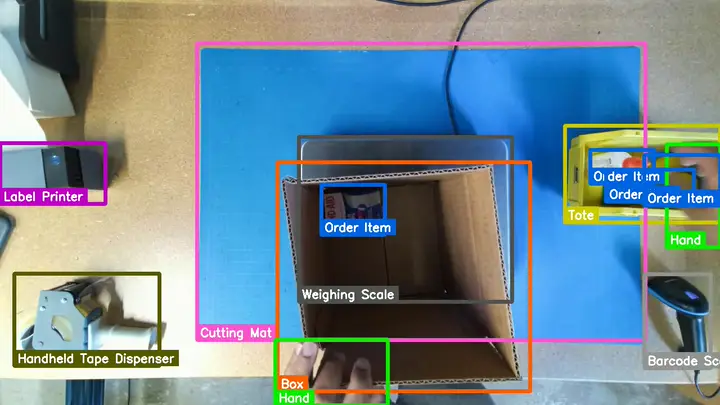

Pack station verification

This is the most mature and widely deployed use case. Cameras at the pack station capture the packing process and verify that the correct items, quantities, and packaging materials are in each order before it ships.

This works because the pack station is a constrained environment. The camera has a known field of view. The items appear in a predictable area (the box or the station surface). The system knows what should be there (from the order manifest). This combination of constraints makes the vision problem tractable without requiring general-purpose object recognition across millions of SKUs.

Rabot’s deployment at Staci Americas processes 25,000 orders per day across 19 stations. These are production numbers, not pilot results.

Quality inspection

Visual inspection of products for defects, damage, or compliance issues. Does the label look right? Is the retail packaging intact? Is there visible damage that should prevent the item from shipping?

Computer vision can perform these checks at production speed without adding a manual inspection step. The camera catches what the packer might miss when they’re focused on speed.

Performance measurement

Measuring how long each packing operation takes, identifying idle time, tracking throughput by station and operator. Computer vision can measure these metrics passively from the same cameras used for verification, without requiring packers to clock tasks or log activities.

Self-reported productivity data is unreliable. Packers round numbers, forget to log, or game the system. Camera-based measurement captures what actually happens.

Evidence and documentation

Every packed order gets a searchable visual record. When a dispute arises weeks after shipment, you can pull up exactly what was packed, how it was packed, and when. Operators using order-specific visual evidence consistently cut investigation time from hours of scrubbing through general CCTV footage to minutes.

Where computer vision doesn’t work (yet)

Being honest about limitations matters more than overselling capabilities.

General inventory recognition across massive catalogs. Recognizing any one of 500,000 SKUs from any angle in any lighting condition is still extremely difficult. Systems that work at the pack station (where the set of expected items is constrained to the order manifest) wouldn’t work if asked to identify arbitrary products from a massive catalog.

Transparent or highly reflective items. Glass bottles, clear plastic packaging, and reflective surfaces challenge current models. The visual features are harder to extract when the product reflects its environment rather than presenting a consistent appearance.

Extremely similar products. Products that differ only in text on the label (same shape, same color, different flavor) can be difficult for vision models. This is improving rapidly as models get better at reading text (OCR integrated with vision), but it’s still a harder case than products with visually distinct packaging.

Fully occluded items. If an item is inside opaque, featureless packaging that gives no external visual indication of what’s inside, vision can’t verify it. This is a physics problem, not a technology problem. You can’t see through cardboard.

The USB camera approach

One of the bigger shifts in warehouse computer vision has been the move away from expensive, specialized cameras toward standard USB and IP cameras.

Early systems required industrial-grade cameras with specific lenses, precise mounting requirements, and proprietary interfaces. These cameras cost hundreds or thousands of dollars each. Installation required a technician. Every station was a capital project.

Modern systems work with standard USB cameras that cost under $100. The intelligence isn’t in the camera. It’s in the software running on the edge device. This means deployment at a new station takes minutes instead of days, and scaling from 5 stations to 50 isn’t a capital expenditure discussion.

Rabot’s setup works this way: standard cameras, edge processing hardware, and cloud-based model management. The hardware at each station is simple and cheap. The AI runs on edge devices or in the cloud, depending on your latency requirements.

What’s coming next

Computer vision in warehouses is still early. The pack station applications are the first wave. Here’s where the technology is heading.

Receiving and putaway. Verifying inbound shipments at the dock door — confirming that what arrived matches the ASN without manually counting every case.

Picking verification. Extending visual verification from the pack station back to the pick face, confirming the right item was pulled from the right location using cameras at the pick zone.

Autonomous quality gates. Fully automated inspection points with no human involvement. Products move through a camera array, get inspected, and route to pack or to exception handling.

Predictive error prevention. Using visual data patterns to predict errors before they happen. A packer who’s been rushing for 30 minutes has a higher error probability than one working at a steady pace. Vision can detect these patterns and trigger proactive interventions.

Cross-facility model sharing. Models trained at one facility working immediately at a new site without retraining. Transfer learning and domain adaptation are making this practical, cutting deployment time for new locations.

The practical takeaway

Computer vision moved from research lab to warehouse floor because hardware got cheaper, models got smaller, and training data got richer. The pack station was the first production use case because it pairs a constrained environment with high economic impact. One camera, one station, one edge device — and every order gets verified with a visual record.

Rabot is running across production deployments and integrates with the major WMS platforms already on the floor. The technology works. The question for you isn’t whether computer vision is ready. It’s whether you can afford to keep packing without it while errors pile up every day, week, and shift.